Ph.D. in Robotics · Control Engineer

Jan Smisek

Translating advanced control theory into real-world systems, from force-feedback robotics on the International Space Station to laser communication terminals linking satellites in orbit.

Profile

With over a decade of experience in technical leadership, I have focused on developing high-performance systems in challenging environments. Currently, I lead the Space and Defense technology group at Transcelestial in Singapore, overseeing the development of laser-based communication systems for aerospace and defense applications.

Previously, my work at the European Space Agency (ESA) Telerobotics & Haptics Lab involved architecting control systems for telerobotics. This included contributions to the Haptics-2 experiment, which facilitated the first force-feedback handshake between the International Space Station and Earth.

I also led robotics development at Speedcargo, delivering AI-powered logistics solutions for aviation. I hold a Ph.D. in Robotics from Delft University of Technology, with a research focus on haptic shared control and human-machine interaction.

Project Portfolio

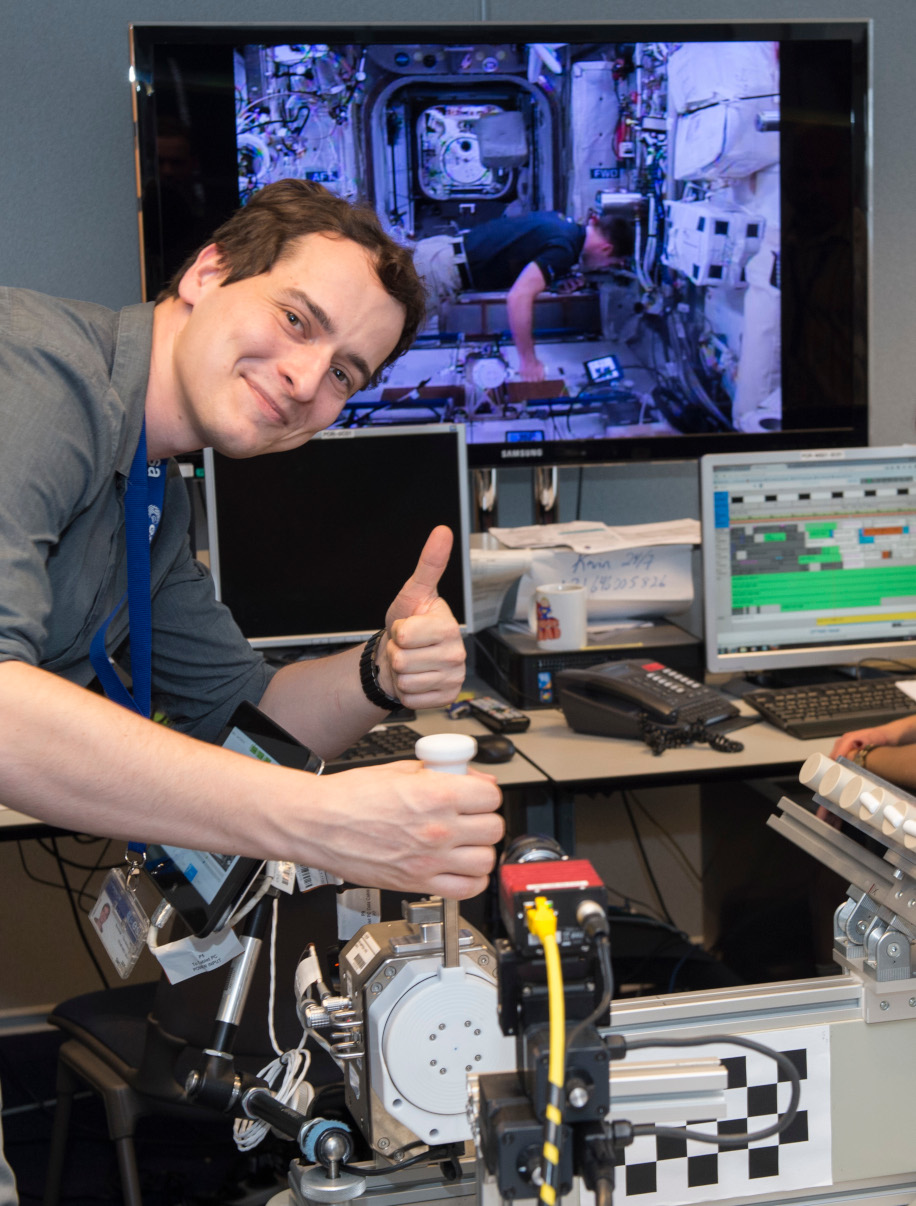

ESA INTERACT

Demonstration of end-to-end real-time rover control from the International Space Station.

6G STARLAB MISSION

Space-qualified laser terminal for Europe's 6G StarLab mission.

INTERSATELLITE LINK

High-speed inter-satellite laser communications with ST Engineering.

ESA HAPTICS-1

First high-performance haptic joystick on the International Space Station.

ESA HAPTICS-2

Teleoperation with force feedback over geostationary satellite links.

INTERACT ROVER

Mobile platform development for high-latency lunar-analogue operations.

HAPTIC SHARED CONTROL

Systematic framework for improving human-machine interaction in teleoperation.

Experience

Transcelestial

Head of Space & Defense

Responsible for strategy and engineering execution within the space and defense verticals, managing a portfolio of R&D contracts. The team develops laser communication terminals, including a space-to-ground system deployed into Low Earth Orbit in 2023. My role covers the full development lifecycle, from architectural design using ROS 2 and Simulink to hardware-in-the-loop and software-in-the-loop (HIL/SIL) testing and mission operations.

Speedcargo

Lead Robotics Engineer

Led the engineering team in the development of an AI-powered robotic aviation cargo packaging system. By implementing ML-driven perception and real-time grasp planning in ROS, the system achieved manipulation speeds comparable to human operators, handling a wide variety of package types in deployment at Changi Airport.

European Space Agency

Robotics & Control Engineer

Contributed to the development of real-time control software for spaceflight experiments (Interact, Haptics-1, and Haptics-2) deployed on the ISS. Work included implementing force/torque control algorithms for commercial robot arms (KUKA) and autonomous navigation software for rover platforms, supporting teleoperation tasks under significant time delays.

Selected Publications

Haptic guidance on demand: A grip-force based scheduling of guidance forces

IEEE Transactions on Haptics, 2018

Smisek, J., Mugge, W., Smeets, J.B.J., van Paassen, M.M., Schiele, A.

Neuromuscular-System-Based Tuning of a Haptic Shared Control Interface

IEEE Transactions on Human-Machine Systems, 2017

Smisek, J., Sunil, E., van Paassen, M.M., Abbink, D., Mulder, M.

3D with Kinect: High Accuracy Depth Measurement

IEEE International Conference on Computer Vision, 2011

Smisek, J., Jancosek, M., Pajdla, T.